The Intelligence Singularity

What Happens When AI Becomes Better at Foresight Than Governments?

Is Intelligence Leaving Human Hands?

“AI systems are increasingly making decisions that humans used to make, and doing so at speeds and scales we cannot match.”

- Eric Schmidt, 2021 (Chair, U.S. National Security Commission on AI)

There is a moment every intelligence professional dreads - the moment when you realise the system cannot respond fast enough.

I’ve seen this in military operations rooms, in banking intelligence centres, in national crime strategies, and in command-and-control environments. A threat emerges, the indicators are there, and yet the institution is simply too slow, too fragmented, or too distracted to act.

It’s called inertia, and it is most often related to a lack of real-time intelligence, slow planning cycles, risk avoidance, lack of capability, or lack of a sense of urgency (or a combination of all these). Let’s look at a few examples:

September 1985. Oshakati, Namibia. I’m stationed at 310 AFCP (Air Force Command Post). An enemy convoy is about to leave from Xangongo to Ongiva in southern Angola. HF intercept time - 07:50 (Xangongo informing Ongiva that the convoy is about to leave). HF intercept receipt time at 310 AFCP - 08:10. The distance between the two towns is 95 km. It is assumed the convoy leaves at 08:15 (nothing ever happens on time with these guys). Travelling time at 60 km/h is one hour and 35 minutes to get from one town to the other. The OC must be briefed during a short operational planning cycle and approvals obtained. Time elapsed - 20 mins. Aircrew must be briefed at AFB Ondangwa, 40 km away. I brief the IO there via telephone as to the situation, who then briefs the Imp crew who have already been scrambled. Time after briefing is 08:50. The Imps take off at 09:10. Flying speed - 295 knots. The distance to the likely intercept point on the road is 150 km. Flying time - roughly 18 minutes. TOT - 09:28. Just too late. The convoy did actually leave on time…
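The arithmetic of this incident can be laid out as a small sketch. It uses only the times, distances, and speeds quoted above. The one simplification (an assumption, not part of the account) is that the convoy counts as "off the road" the moment it would reach Ongiva. The straight-line cruise time at 295 knots works out to about 16.5 minutes, so the quoted 18 minutes presumably includes some allowance for climb and positioning.

```python
from datetime import datetime, timedelta

KNOT_KMH = 1.852  # one knot is exactly 1.852 km/h
FMT = "%H:%M"

def add_minutes(hhmm, minutes):
    """Return the clock time `minutes` after `hhmm` (same day)."""
    return (datetime.strptime(hhmm, FMT) + timedelta(minutes=minutes)).strftime(FMT)

def minutes_between(start, end):
    """Whole minutes from `start` to `end`; negative if `end` is earlier."""
    delta = datetime.strptime(end, FMT) - datetime.strptime(start, FMT)
    return int(delta.total_seconds() // 60)

def travel_minutes(distance_km, speed_kmh):
    """Travel time in minutes at constant speed."""
    return distance_km * 60 / speed_kmh

# Figures taken from the account above.
convoy_minutes = round(travel_minutes(95, 60))        # 95 km at 60 km/h
cruise_minutes = travel_minutes(150, 295 * KNOT_KMH)  # straight-line cruise only
flight_minutes = 18                                   # the account's own figure
time_on_target = add_minutes("09:10", flight_minutes)

# Assumption: the convoy is no longer interceptable once it reaches Ongiva.
for label, departure in [("assumed late departure", "08:15"),
                         ("actual on-time departure", "07:50")]:
    arrival = add_minutes(departure, convoy_minutes)
    margin = minutes_between(time_on_target, arrival)
    print(f"{label}: convoy arrives {arrival}, TOT {time_on_target}, margin {margin:+d} min")
```

Under the assumed 08:15 departure the aircraft would have had 22 minutes in hand; with the convoy leaving on time at 07:50, the same chain of briefings puts the aircraft overhead three minutes after the road is empty.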

Early March 2003. The SABRIC (SA Banking Risk Information Centre) intelligence cell. We sit down for our weekly hotspot meeting with the bank security reps, crime intelligence rep from the police, and various other industry crime-fighting reps. Every week, after the meeting, a hotspot report is generated that gets sent to everyone involved. The report provides a short overview of suspicious activities in various areas, predominantly with the aim of preventing armed robberies at banks or retail centres. Halfway through the meeting, it becomes clear that there have been numerous suspicious vehicle sightings in the vicinity of Bank A during the past week. The information is obtained from the Bank A rep, as well as from the rep of the retail business council of that specific area. The number plates turn out to be false. An immediate red flag. The police intelligence member takes out his phone to inform his colleagues. My phone rings. Bank A has just been hit.

Early 2011. The Pearl Qatar island, just off the coast of Doha, Qatar. I sit in my chair in the command centre, looking at the large video wall in front of me. The setup is organised in rows. In the front, down below, are the call centre agents. Behind them, one level up, are the security and facilities dispatchers. Then, another level higher, I sit with my supervisors. I scan the wall again. Something just does not feel right today, but I cannot put my finger on it. Then it hits me - there is no traffic movement at the Viva Bahria roundabout. As I turn to the call centre supervisor to hear whether there have been any calls to this effect, my phone rings. It is the CEO. Furious. “I’m getting complaints from the residents in Viva Bahria that there is a water leak in the road, and they cannot get out of the precinct! What is going on?” I sigh. Just another day in paradise…

With the luxury of hindsight, there are many points of failure in the above scenarios (all real, by the way). It comes down to a lack of situational awareness, resulting in sub-optimal sensemaking and, hence, decision-making. This is the decision loop in CAS (Complex Adaptive Systems): information informs situational awareness, which informs sensemaking, which in turn informs decision-making.

But in an analogue and early digital era, we did what we could. What is different now is that AI sees the threat long before any human does. We are entering a world where:

AI reads more data (and information and intelligence, just to be precise) than all intelligence agencies combined.

AI integrates signals across borders instantly - geographic borders, industry borders, and cognitive borders.

AI generates thousands of scenarios in minutes. I wrote about this in my last blog, but more about it later on.

AI detects group formation, crowd shifts, economic instability, crime patterns, and political agitation - before agencies even meet to discuss them. The point is, of course, that it must KNOW to look for these.

This is the threshold of what I call:

The Intelligence Singularity - the tipping point where artificial intelligence becomes better at foresight, sensemaking, and risk anticipation than governments, corporations, or traditional intelligence communities.

The implications are profound, unavoidable, and perhaps even uncomfortable.

Intelligence Was Designed for a World That No Longer Exists

“Now, the reason the enlightened prince and the wise general conquer the enemy whenever they move and their achievements surpass those of ordinary men is foreknowledge.”

- Sun Tzu, c. 500 BC (The Art of War)

Traditional intelligence - military, policing, corporate, geopolitical - relies on a model created centuries ago (there are various iterations on the intelligence cycle, depending on the industry):

Requirements - the intelligence problem.

Collection - based on a collection plan.

Processing - includes matters like evaluation and collation.

Analysis - connecting the dots and producing the product.

Dissemination - in whatever form may be required.

This worked when:

Information was scarce (no or little access to OSINT).

Threats moved slowly (also because the flow of information was slow).

Borders mattered. Real borders, not virtual ones.

Institutions had near-monopolies on surveillance and analysis.

Humans could “keep up.”

None of this holds true anymore. Today’s threat environment is rapid, decentralised (networked), digital, and interconnected at many levels. To illustrate this:

SOC (Serious Organised Crime) syndicates and terrorist organisations operate like multinational corporations. We are all familiar with SaaS (Software as a Service). But the new typology in this environment is characterised by concepts like VaaS (Violence as a Service) and CaaS (Crime as a Service).

Disinformation travels faster than the truth can be verified. The future CI (Counter Intelligence) specialist will be one with a degree in IT.

Economic instability, climate shocks, social movements, and political unrest (polycrisis) now cascade globally. Everything operates as a system of systems - CAS in action.

Into this almost dystopian world enters AI - and suddenly the game changes.

AI Has Quietly Become the World’s First Global Intelligence System

“Artificial intelligence is developing into an autonomous actor that does not need the permission or the cooperation of human beings - or even of governments - to influence the world.”

- Yuval Noah Harari, 2023

Borders, jurisdictions, or bureaucracies do not bind AI. It can ingest and analyse:

News flows, including leading news channels, social media, and even flows on the Dark Web.

Social signals. One of the first signals of a pending rise in social unrest is an uptick in social media chatter.

Supply-chain disruptions. The one development that is in the mainstream media at present is the matter of Russian oil exports. On the surface, the players and their agendas are quite clear. But believe you me, there are strong undercurrents at play that the average person on the street is not privy to. Just one example - on 27 November 2025, a Turkish tanker transporting Russian oil was attacked by (reportedly) a Ukrainian device of sorts. But Turkey is, at least ostensibly, on the side of Ukraine, even providing them with drones. What’s really going on?

I’m not going into each of these in detail, but the same holds true for matters related to crime statistics, financial movements, public sentiment, cross-border data, satellite imagery, environmental indicators, and market anomalies.

AI processes all of this in milliseconds. So, for the first time in human history, intelligence capability is not controlled (only) by states.

AI is becoming the world’s first decentralised, global intelligence capability - operating above nations, across industries, and faster than anyone can react.

This is the heart of the Intelligence Singularity.

And to me personally, the irony is so thick you can cut it with a knife! I previously related this story in a blog, but briefly: in about 2004, soon after the establishment of SABRIC (then known as the Banking Risk Intelligence Centre, and not the Information Centre it subsequently became), my boss and I (I was the GM for Crime Risk Intelligence) were called in to see the then Minister for Intelligence Services. We got a dressing-down for having the gall to establish a “private sector intelligence capability.” Even then, I marvelled at the arrogance of the state in thinking that it had the sole mandate on the concept of intelligence. Anyhow, true to form, the banks tripped over themselves in their hurry to change the name.

Today? Private sector intelligence organisations are sprouting so rapidly that it is difficult to keep track.

Early Warning Will Never Be the Same Again

“We are collecting more data than we can analyse, more signals than we can interpret, and more indicators than we can act upon. The warning problem is no longer collection - it is understanding.”

- Michael Hayden, 2016

AI is already outperforming human analysts in:

Weak-signal detection

AI can detect contradictions long before they become trends - something intelligence analysts have always battled to do. You have to first identify some signposts for the variable that you are monitoring. Then you have to identify relevant tripwires that will reveal a statistically significant response if crossed…and by then, your coffee is cold.
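To make the signpost-and-tripwire idea concrete, here is a minimal sketch. It treats the signpost as a numeric indicator with a historical baseline and the tripwire as a z-score threshold. The function name, the threshold of 2.0, and the sample counts are all hypothetical, chosen only for illustration; a real early-warning system would need far more care about seasonality and data quality.

```python
from statistics import mean, stdev

def tripwire_crossed(baseline, latest, z_threshold=2.0):
    """Check one signpost against its tripwire.

    baseline    - past observations of the indicator (at least two values)
    latest      - the newest observation
    z_threshold - hypothetical tripwire: how many standard deviations
                  from the baseline mean counts as a significant move
    Returns (crossed, z_score).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation
    if sigma == 0:
        # A flat baseline: any change at all is treated as a crossing.
        return (latest != mu, 0.0 if latest == mu else float("inf"))
    z = (latest - mu) / sigma
    return (abs(z) >= z_threshold, z)

# Hypothetical daily counts of suspicious-vehicle sightings in one precinct.
baseline = [10, 12, 11, 9, 13, 10, 12]

quiet_day = tripwire_crossed(baseline, 12)
busy_day = tripwire_crossed(baseline, 16)
print("quiet day:", quiet_day)
print("busy day:", busy_day)
```

The point of the sketch is speed rather than sophistication: the check is cheap enough to rerun on every new observation, which is exactly what a weekly meeting cannot do.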

Pattern recognition

This is closely linked to the previous point, but where analysts see random noise, AI sees structure. We tend to need a great deal of such “random noise” before we can start connecting the dots.

Scenario generation

AI generates dozens of plausible futures, recalculates them continuously, and highlights early indicators that should trigger escalation - more about this in a breakthrough analysis further on.

Cross-impact analysis

Normally, this would be done in a very laborious fashion, using some sort of regression analysis to determine probabilities. AI can link chains such as the following (examples only) faster than any fusion centre in existence:

Crime - economy - politics - infrastructure - social stability.

Telegram chatter - weapon flows - money flows - attacks.

Cyber events - financial flows - disinformation campaigns.
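As a rough illustration of linking one indicator to another, the sketch below uses lagged correlation, which is a much simpler stand-in for full cross-impact analysis and not the same technique. It asks at what delay one series best predicts another. The two series are invented for the example, and the function names are hypothetical.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / sqrt(vx * vy)

def best_lag(leading, lagging, max_lag=3):
    """Find the delay at which `leading` correlates best with later `lagging`.

    Both series must have the same length. Returns (lag, correlation).
    """
    results = []
    for lag in range(max_lag + 1):
        xs = leading[:len(leading) - lag]  # earlier values of the leading series
        ys = lagging[lag:]                 # values of the lagging series `lag` steps later
        results.append((lag, pearson(xs, ys)))
    return max(results, key=lambda item: item[1])

# Invented weekly counts: attacks echo the chatter two weeks later.
chatter = [1, 2, 8, 3, 1, 2, 9, 2, 1, 1]
attacks = [0, 0, 1, 2, 8, 3, 1, 2, 9, 2]

lag, r = best_lag(chatter, attacks)
print(f"best lag: {lag} weeks, correlation {r:.2f}")
```

Correlation at a lag is not causation, so a result like this is a prompt for an analyst to investigate the chain, not a finding in itself.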

Behavioural analysis

AI can detect mobilisation, radicalisation, sentiment shifts, financial stressors, crowd movement, and online discussions in real time. For intelligence services, police agencies, and corporate security teams, this is a great help.

Governments Are Not Ready - and That Is the Real Crisis

“Our institutions are not designed for a world in which technology evolves faster than the political systems meant to regulate it.”

- Henry Kissinger, 2018

The Intelligence Singularity shows up weaknesses in bureaucratic structures:

Institutions move too slowly. AI can recalculate a risk probability 100 times in the time it takes for a risk management committee to mull it over.

Governments operate in silos. AI is integrated, a trait not characteristic of humans.

Data governance is lagging behind. Governance, and for that matter, the law, treats data as something belonging to an institution (and thinking back to my days in military intelligence, one built one’s reputation proudly by being able to pronounce “my pile (of intel) is bigger than yours!”). AI treats data as if it belongs to everyone, something the world produces continuously. It has no time for parochialism.

Political structures respond to events; AI responds to signals. This mismatch is the cause of most (future) governance failures.

Intelligence organisations were built for secrecy. AI was not - it was built for scale, and this is a fundamental contradiction that creates asymmetry between private sector AI and state intelligence.

Crime and Terrorism Will Change Dramatically

“Technology doesn’t just change the tools of crime - it changes the very structure of criminal activity.”

- Bruce Schneier, 2019

The basic logic - the fundamental premise - that underpins how intelligence analysts think needs to be influenced by the following:

Organised crime. AI can detect SOC patterns long before law enforcement can, but criminals will use AI to hide in the noise.

Terrorism. AI can detect indicators for mobilisation and planning far earlier, but extremists will use AI to plan, recruit, and coordinate.

Cybercrime. AI enables both hyper-defensive postures and hyper-offensive malware.

Money laundering. Machine learning can detect structuring, smurfing, mule patterns, and shadow banking behaviours rapidly. Traditional policing and intelligence structures simply cannot match this pace.
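To show what detecting structuring means in practice, here is a deliberately simple rule-based sketch. It is not machine learning. It flags accounts that make several cash deposits just under a reporting threshold within a short window. The threshold of 10,000, the 10% band, the seven-day window, and the sample deposits are all hypothetical values chosen for illustration.

```python
from collections import defaultdict
from datetime import date, timedelta

def flag_structuring(deposits, threshold=10_000, band=0.10,
                     window_days=7, min_count=3):
    """Flag accounts with repeated deposits just below a reporting threshold.

    deposits - iterable of (account, day, amount) tuples
    An account is flagged when at least `min_count` deposits, each within
    `band` (as a fraction) below `threshold`, fall inside `window_days` days.
    All default values are illustrative assumptions.
    """
    near_threshold = defaultdict(list)
    for account, day, amount in deposits:
        if threshold * (1 - band) <= amount < threshold:
            near_threshold[account].append(day)

    flagged = set()
    span = timedelta(days=window_days - 1)
    for account, days in near_threshold.items():
        days.sort()
        # Slide over each run of `min_count` consecutive near-threshold deposits.
        for i in range(len(days) - min_count + 1):
            if days[i + min_count - 1] - days[i] <= span:
                flagged.add(account)
                break
    return flagged

# Invented sample data.
deposits = [
    ("A", date(2024, 1, 1), 9_500),
    ("A", date(2024, 1, 3), 9_800),
    ("A", date(2024, 1, 5), 9_900),
    ("B", date(2024, 1, 2), 9_500),
    ("B", date(2024, 2, 20), 9_700),
    ("C", date(2024, 1, 4), 15_000),
]

flagged = flag_structuring(deposits)
print("flagged accounts:", sorted(flagged))
```

A learned model would replace the fixed band and window with patterns inferred from labelled cases, but the underlying question, whether the deposits look engineered to stay under the line, is the same.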

The future of crime-fighting will be a race between AI used to do harm and AI used to do good.

Democracy Faces an Uncomfortable Question

“Once artificial intelligence knows you better than you know yourself, democracy will find it increasingly difficult to survive.”

- Yuval Noah Harari, 2018

Suppose, then, that AI can:

Detect sentiment in voter patterns.

Map political polarisation.

Forecast civil unrest.

Amplify or suppress narratives.

Predict election outcomes with alarming accuracy.

This raises a question that every democracy will have to confront:

What happens when AI can predict political outcomes better than politicians, and influence them faster than electoral systems can regulate?

This is one of the central governance dilemmas of the Intelligence Singularity.

The Future Intelligence Officer Will Be a Human–AI Hybrid

“The future of intelligence analysis will be a partnership in which humans and machines work side by side, each compensating for the other’s limitations.”

- Lt. Gen. Jack Shanahan, 2020

In my career, I moved intelligence from analogue to digital, from tactical to strategic, from siloed to integrated. Now the shift is even bigger. The intelligence officer of the next decade will be:

An AI conductor (getting to know ML will not be optional).

A systems thinker (so that, together with AI, she can identify opponent CoGs).

A strategic interpreter (reduce the noise, connect the real dots, give the correct advice).

A decision integrator (understand how OODA, situational awareness, PDCA, and DMAIC can influence decision outcomes, so as to cut to the chase).

A scenario architect (understand how this is integrated into strategic planning and how AI can assist).

An early-warning designer (redesign early warning for the Intelligence Singularity to include new types of signposts and tripwires - with the assistance of AI).

Humans will continue to do what they do best, namely, interpret, explain, infer intent, sense political nuance, apply ethics, make meaning (sense), and shape strategy.

AI will do everything else. This hybrid model is inevitable. Let us illustrate this practically by means of a model derived from what I’ve said above and from my previous article on Scenario Planning 3.0.

Scenario Planning 3.0

“You cannot count on a single future anymore; leaders must build strategies that thrive across multiple possible futures.”

- Rita McGrath, 2019

I consider Scenario Planning 3.0 to be the new operating system of the Intelligence Singularity. The reason organisations still fail - despite oceans of data - is that they lack a framework to interpret the future. That is why Scenario Planning 3.0 is emerging as the only viable strategic discipline for an AI-driven world. It merges machine-generated futures with probabilistic risk forecasting, strategic interpretation, and human governance and leadership.

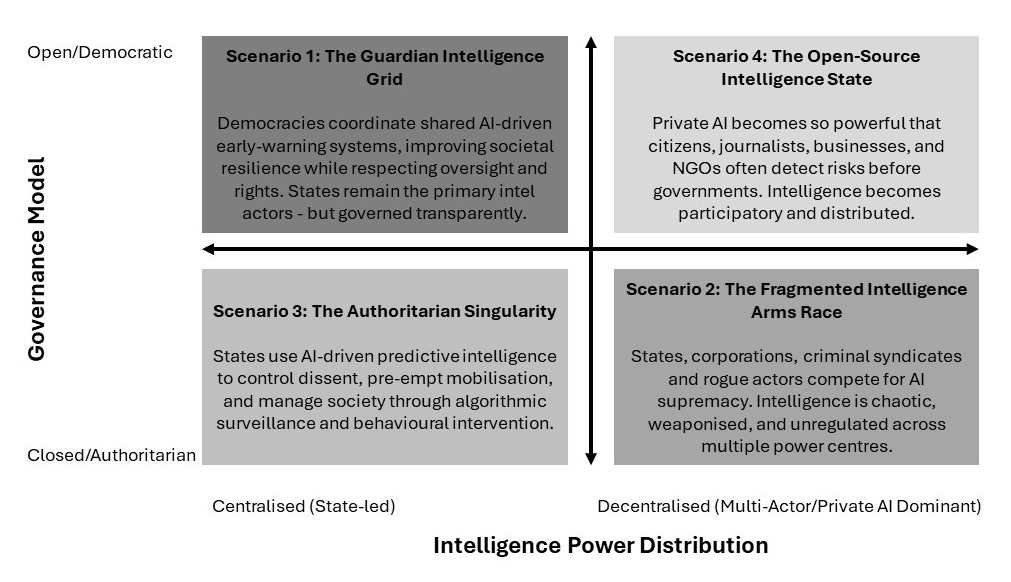

AI, therefore, handles the complexity. Humans handle the judgment. Together, they form the first adaptive strategic-intelligence ecosystem capable of operating at the speed of 21st-century risks within Complex Adaptive Systems. To illustrate this, I posit four plausible futures; four high-level scenarios - the first outputs of an Intelligence Singularity thought experiment.

Firstly, I selected two axes to explain the system, not just label it.

Axis 1: The Governance Model, which captures how political systems choose to use AI-powered intelligence:

Open/Democratic. Transparency, oversight, rights, distributed intelligence.

Closed/Authoritarian. Centralised control, coercive surveillance, pre-emptive suppression.

Axis 2: Intelligence Power Distribution, which captures who controls advanced foresight capability:

Centralised. The state maintains the dominant intelligence capability.

Decentralised. Private AI, corporations, citizens, and networks possess equal or greater foresight power than governments.
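Crossing the two axes mechanically gives the four quadrants. The short sketch below enumerates them from the axis values defined above; it deliberately assigns no scenario names, since the labels for each quadrant are the author's to give. The variable and function names are illustrative only.

```python
from itertools import product

# Axis values exactly as defined in the text above.
GOVERNANCE_MODEL = ["Open/Democratic", "Closed/Authoritarian"]
POWER_DISTRIBUTION = ["Centralised", "Decentralised"]

def scenario_grid():
    """Return every combination of governance model and power distribution."""
    return [
        {"governance": governance, "power": power}
        for governance, power in product(GOVERNANCE_MODEL, POWER_DISTRIBUTION)
    ]

grid = scenario_grid()
for number, quadrant in enumerate(grid, start=1):
    print(f"Quadrant {number}: {quadrant['governance']} x {quadrant['power']}")
```

Two binary axes always yield exactly four worlds, which is what keeps the scenarios cleanly separated: any two quadrants differ on at least one structural force.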

Mapping these axes against each other results in the following scenario grid:

I believe these axes and scenarios work because they reflect the real structural forces shaping AI and intelligence. Political governance determines how intelligence is used. The distribution of capability determines who controls the future.

They, furthermore, separate the scenarios cleanly and meaningfully. Each quadrant now represents a unique, logically coherent world, not just a narrative variation.

Finally, they show the deep tensions of the Intelligence Singularity, namely:

Democracy vs control.

Public good vs private power.

Regulation vs chaos.

Oversight vs optimisation.

It should be obvious from the scenario grid that none of these scenarios comes without risk. This is where human intervention is important. Depending on which quadrant you want to play in, as a country, organisation, or law enforcement entity, you have to strategise for it. So yes - you can strategise for a preferred scenario. But remember, your grand strategy should be robust enough to cater for all four scenarios!

Conclusion

“We are entering an era of accelerating returns, where change will be so rapid that the past will cease to be a reliable guide to the future.”

- Ray Kurzweil, 2005

The Intelligence Singularity is not the future - it is the threshold into the future we are crossing. This shift will be uncomfortable for institutions built in slower eras. But it is full of opportunity for leaders, governments, and organisations willing to adapt.

The central truth of the Intelligence Singularity is this:

Intelligence is no longer the property of those who can collect the most, or who sit on the biggest pile of information; it belongs to those who can interpret AI-driven futures with knowledge and speed (and integrity).

The Intelligence Singularity does not herald the termination of human intelligence. No, it is the beginning of augmented intelligence - where the future becomes visible far sooner, and where the organisations that listen will thrive in ways we are only beginning to imagine.

In fact, the future belongs to those who can judge its approach speed the best…

References

Bostrom, N. (2014) Superintelligence: Paths, Dangers, Strategies. Oxford: Oxford University Press.

Charette, R. (2023) ‘AI and the Future of Intelligence Analysis’, IEEE Spectrum. Available at: https://spectrum.ieee.org

Clarke, R. and Knake, R. (2019) The Fifth Domain: Defending Our Country, Our Companies, and Ourselves in the Age of Cyber Threats. New York: Penguin.

Cukier, K. and Mayer-Schönberger, V. (2013) Big Data: A Revolution That Will Transform How We Live, Work and Think. London: John Murray.

Halevy, A., Norvig, P. and Pereira, F. (2009) ‘The Unreasonable Effectiveness of Data’, IEEE Intelligent Systems, 24(2), pp. 8–12.

Kahneman, D. (2011) Thinking, Fast and Slow. New York: Farrar, Straus and Giroux.

Kott, A. and Alberts, D. (2017) ‘Strategic Intelligence in the Age of AI’, Journal of Defense Modeling and Simulation, 14(3), pp. 193–203.

Kurzweil, R. (2005) The Singularity Is Near: When Humans Transcend Biology. New York: Viking Press.

Makridakis, S. et al. (2018) ‘Forecasting in the Age of Artificial Intelligence’, International Journal of Forecasting, 34(4), pp. 749–760.

Meyer, R. and Kunreuther, H. (2017) The Ostrich Paradox: Why We Underprepare for Disasters. Philadelphia: Wharton Digital Press.

Russell, S. and Norvig, P. (2021) Artificial Intelligence: A Modern Approach. 4th edn. Upper Saddle River: Pearson.

Schwartz, P. (1991) The Art of the Long View: Planning for the Future in an Uncertain World. New York: Doubleday.

Tetlock, P. and Gardner, D. (2015) Superforecasting: The Art and Science of Prediction. New York: Crown.

Zegart, A. (2022) Spies, Lies, and Algorithms: The History and Future of American Intelligence. Princeton: Princeton University Press.

A singularity is a threshold where the old model no longer applies.